AWS Controllers for Kubernetes

I struggled to find a simple, working example of using ACK, so I put this one together. It walks through creating an S3 bucket.

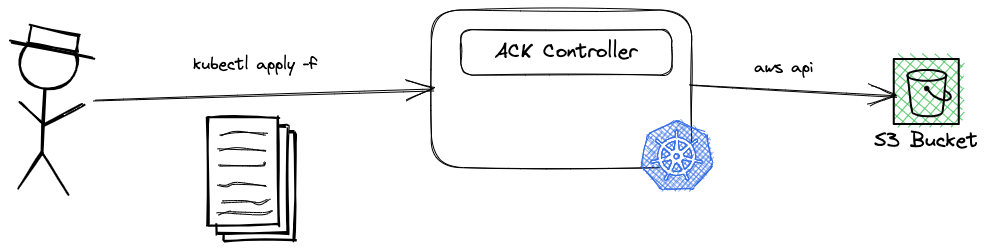

AWS Controllers for Kubernetes (also known as ACK) are built around the Kubernetes extension concepts of Custom Resource and Custom Resource Definitions. You can use ACK to define and use AWS services directly from Kubernetes. This helps you take advantage of managed AWS services for your Kubernetes applications without needing to define resources outside of the cluster.

Say you need an AWS S3 bucket for an application that's deployed to Kubernetes. Instead of using the AWS console, AWS CLI, AWS CloudFormation, etc., you can define the bucket in a YAML manifest and deploy it using familiar tools such as kubectl. The end goal is to let users (software engineers, DevOps engineers, operators, etc.) use the same interface (the Kubernetes API in this case) to describe and manage AWS services along with native Kubernetes resources such as Deployment, Service, etc.

Deploy a test cluster with EKS

    $ cat cluster.yml
    apiVersion: eksctl.io/v1alpha5
    kind: ClusterConfig
    metadata:
      name: ack-cluster
      region: us-east-1
      version: "1.23"
    iam:
      withOIDC: true

    managedNodeGroups:
      - name: managed-ng-1
        minSize: 1
        maxSize: 2
        desiredCapacity: 1
        instanceType: t3.small
        spot: true

    $ eksctl create cluster -f cluster.yml
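Once eksctl returns, it can help to confirm that the node group is actually ready before installing anything on it. The following is a minimal sketch, assuming kubectl is already pointed at the new cluster; `wait_for_nodes` is a hypothetical helper name and the 300s default timeout is an arbitrary choice.

```shell
# Block until every node reports the Ready condition, or fail on timeout.
# The timeout argument is optional; 300s is an assumed default.
wait_for_nodes() {
  kubectl wait --for=condition=Ready nodes --all --timeout="${1:-300s}"
}
```

Call it as `wait_for_nodes` or, for a slower cluster, `wait_for_nodes 600s`. A non-zero exit status means at least one node did not become ready in time.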

Install the S3 controller

    export SERVICE=s3
    export RELEASE_VERSION=$(curl -sL https://api.github.com/repos/aws-controllers-k8s/$SERVICE-controller/releases/latest | grep '"tag_name":' | cut -d'"' -f4)
    export ACK_SYSTEM_NAMESPACE=ack-system
    export AWS_REGION=us-east-1

    aws ecr-public get-login-password --region us-east-1 | helm registry login --username AWS --password-stdin public.ecr.aws

    helm install --create-namespace -n $ACK_SYSTEM_NAMESPACE ack-$SERVICE-controller \
      oci://public.ecr.aws/aws-controllers-k8s/$SERVICE-chart --version=$RELEASE_VERSION --set=aws.region=$AWS_REGION

    $ kubectl get crd

    NAME                          CREATED AT
    buckets.s3.services.k8s.aws   2022-12-15T11:05:35Z
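The `RELEASE_VERSION` line above is the least obvious part of the install, so here is the same `grep | cut` pipeline isolated and run against a canned response. This is only a sketch: `extract_tag_name` is a hypothetical helper, the sample JSON and its `v0.0.0` tag are made up for illustration, and the approach assumes the API returns one JSON field per line.

```shell
# Print the value of "tag_name" from GitHub release JSON read on stdin.
# Splitting the matching line on double quotes puts the value in field 4.
extract_tag_name() {
  grep '"tag_name":' | cut -d'"' -f4
}

# Made-up sample response; a real one has many more fields.
sample='{
  "url": "https://api.github.com/repos/example/example/releases/1",
  "tag_name": "v0.0.0",
  "name": "example release"
}'

printf '%s\n' "$sample" | extract_tag_name   # prints v0.0.0
```

If the variable ever comes back empty (for example, because the API rate-limited the request), the helm install will fail on the `--version` flag, so it is worth echoing `$RELEASE_VERSION` before moving on.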

Add the IAM permissions to the pod

    export SERVICE=s3
    export ACK_K8S_SERVICE_ACCOUNT_NAME=ack-$SERVICE-controller

    export ACK_SYSTEM_NAMESPACE=ack-system
    export EKS_CLUSTER_NAME=ack-cluster
    export POLICY_ARN=arn:aws:iam::aws:policy/AmazonS3FullAccess

    # IAM role has a format - do not change it. You can't use any arbitrary name
    export IAM_ROLE_NAME=ack-$SERVICE-controller-role

    eksctl create iamserviceaccount \
      --name $ACK_K8S_SERVICE_ACCOUNT_NAME \
      --namespace $ACK_SYSTEM_NAMESPACE \
      --cluster $EKS_CLUSTER_NAME \
      --role-name $IAM_ROLE_NAME \
      --attach-policy-arn $POLICY_ARN \
      --approve \
      --override-existing-serviceaccounts

Then restart the controller pod so that it picks up the new IAM permissions:

    $ kubectl get deployments -n $ACK_SYSTEM_NAMESPACE

    NAME                         READY   UP-TO-DATE   AVAILABLE   AGE
    ack-s3-controller-s3-chart   1/1     1            1           7m1s

    $ kubectl -n $ACK_SYSTEM_NAMESPACE rollout restart deployment ack-s3-controller-s3-chart
    deployment.apps/ack-s3-controller-s3-chart restarted

    $ kubectl get pods -n $ACK_SYSTEM_NAMESPACE
    NAME                                         READY   STATUS    RESTARTS   AGE
    ack-s3-controller-s3-chart-87c8bbfbc-fjjfk   1/1     Running   0          5m33s

    $ kubectl describe pod -n $ACK_SYSTEM_NAMESPACE ack-s3-controller-s3-chart-87c8bbfbc-fjjfk | grep "^\s*AWS_"
          AWS_REGION:                   us-east-1
          AWS_ENDPOINT_URL:
          AWS_STS_REGIONAL_ENDPOINTS:   regional
          AWS_ROLE_ARN:                 arn:aws:iam::484235524795:role/ack-s3-controller-role
          AWS_WEB_IDENTITY_TOKEN_FILE:  /var/run/secrets/eks.amazonaws.com/serviceaccount/token
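The two variables that matter in that output are `AWS_ROLE_ARN` and `AWS_WEB_IDENTITY_TOKEN_FILE`: they are injected into the pod when its service account is linked to an IAM role, so if either is missing the restart did not pick up the role. A rough way to script that check is sketched below; `has_irsa_env` is a hypothetical helper name, and it only looks for the variable names, not for a particular role.

```shell
# Read `kubectl describe pod` output on stdin and succeed only if both
# IAM-role-related variables are present.
has_irsa_env() {
  input=$(cat)
  printf '%s\n' "$input" | grep -q 'AWS_ROLE_ARN:' &&
    printf '%s\n' "$input" | grep -q 'AWS_WEB_IDENTITY_TOKEN_FILE:'
}
```

For example, `kubectl describe pod -n $ACK_SYSTEM_NAMESPACE <pod-name> | has_irsa_env && echo "role attached"`, where `<pod-name>` is whatever `kubectl get pods` reports after the restart.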

Deploy the S3 bucket

    $ cat bucket.yml
    apiVersion: s3.services.k8s.aws/v1alpha1
    kind: Bucket
    metadata:
      name: my-ack-s3-bucket-123456789
    spec:
      name: my-ack-s3-bucket-123456789
      tagging:
        tagSet:
          - key: myTagKey
            value: myTagValue

    $ kubectl apply -f bucket.yml

    $ kubectl get bucket
    NAME                         AGE
    my-ack-s3-bucket-123456789   26m

    $ kubectl describe bucket my-ack-s3-bucket-123456789
    Name:         my-ack-s3-bucket-123456789
    Namespace:    default
    Labels:       <none>
    Annotations:  <none>
    API Version:  s3.services.k8s.aws/v1alpha1
    Kind:         Bucket
    Metadata:
      Creation Timestamp:  2022-12-15T11:19:20Z
      Finalizers:
        finalizers.s3.services.k8s.aws/Bucket
      Generation:  1
      Managed Fields:
        API Version:  s3.services.k8s.aws/v1alpha1
        Fields Type:  FieldsV1
        fieldsV1:
          f:metadata:
            f:annotations:
              .:
              f:kubectl.kubernetes.io/last-applied-configuration:
          f:spec:
            .:
            f:name:
            f:tagging:
              .:
              f:tagSet:
        Manager:      kubectl-client-side-apply
        Operation:    Update
        Time:         2022-12-15T11:19:20Z
        API Version:  s3.services.k8s.aws/v1alpha1
        Fields Type:  FieldsV1
        fieldsV1:
          f:metadata:
            f:finalizers:
              .:
              v:"finalizers.s3.services.k8s.aws/Bucket":
        Manager:      controller
        Operation:    Update
        Time:         2022-12-15T11:19:21Z
        API Version:  s3.services.k8s.aws/v1alpha1
        Fields Type:  FieldsV1
        fieldsV1:
          f:status:
            .:
            f:ackResourceMetadata:
              .:
              f:ownerAccountID:
              f:region:
            f:conditions:
            f:location:
        Manager:         controller
        Operation:       Update
        Subresource:     status
        Time:            2022-12-15T11:19:21Z
      Resource Version:  8095
      UID:               d973c580-d5ba-4a8c-a1cc-fdb3e2128f6d
    Spec:
      Name:  my-ack-s3-bucket-123456789
      Tagging:
        Tag Set:
          Key:    myTagKey
          Value:  myTagValue
    Status:
      Ack Resource Metadata:
        Owner Account ID:  484235524795
        Region:            us-east-1
      Conditions:
        Last Transition Time:  2022-12-15T11:19:21Z
        Message:               Resource synced successfully
        Reason:
        Status:                True
        Type:                  ACK.ResourceSynced
      Location:                /my-ack-s3-bucket-123456789
    Events:                    <none>
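The part of that output worth watching is the `ACK.ResourceSynced` condition: `Status: True` means the controller has created the bucket in AWS. Rather than scanning the full describe output, the condition can be pulled out with a JSONPath filter. The helpers below are a sketch with hypothetical names (`bucket_sync_status`, `bucket_is_synced`); they assume the bucket lives in the current namespace.

```shell
# Print the status ("True", "False", ...) of the ACK.ResourceSynced
# condition for the named Bucket resource.
bucket_sync_status() {
  kubectl get bucket "$1" \
    -o jsonpath='{.status.conditions[?(@.type=="ACK.ResourceSynced")].status}'
}

# Succeed only when the controller reports the bucket as synced.
bucket_is_synced() {
  [ "$(bucket_sync_status "$1")" = "True" ]
}
```

For instance, `bucket_is_synced my-ack-s3-bucket-123456789 && echo "bucket is ready"`. An empty status usually means the controller has not reconciled the resource yet, which is often a sign of missing IAM permissions.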

Delete the bucket and the cluster

    kubectl delete -f bucket.yml

    eksctl delete cluster -f cluster.yml
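The order matters here: the controller is what removes the bucket from AWS, and it disappears along with the cluster. Between the two commands, it may be worth confirming on the AWS side that the bucket is really gone. A minimal sketch, assuming the AWS CLI is configured for the same account; `bucket_exists` is a hypothetical helper name.

```shell
# Succeed if S3 answers a HEAD request for the bucket.
# Caveat: a non-zero exit can also mean "access denied", not only "not found".
bucket_exists() {
  aws s3api head-bucket --bucket "$1" >/dev/null 2>&1
}
```

For example, `bucket_exists my-ack-s3-bucket-123456789 && echo "bucket still exists, wait before deleting the cluster"`.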